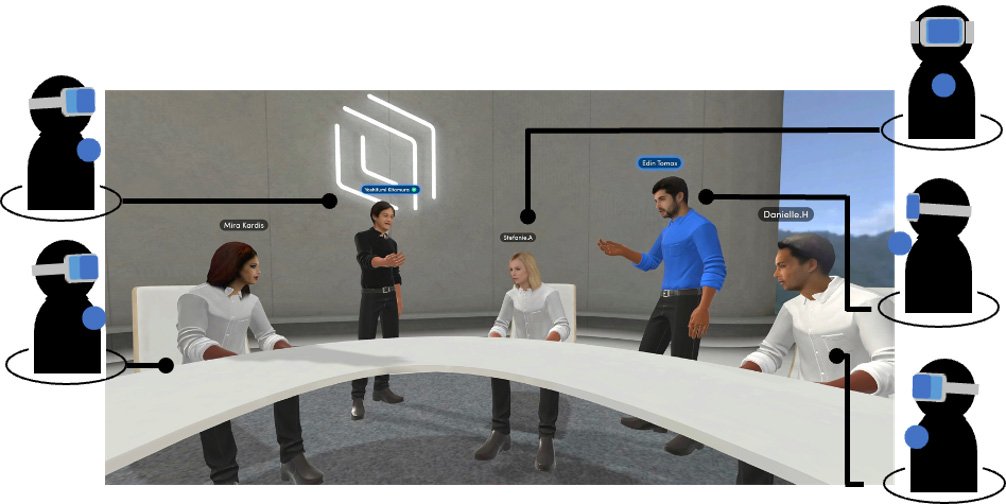

Tohoku University's Research Institute of Electrical Communication (RIEC) has established a new, interdisciplinary research center that will focus its efforts on breaking down communication barriers between the real and virtual worlds. Officially called the New Interdisciplinary ICT Research Center for Cyber and Real Spaces, the center plans to synthesize AI research, psychology and brain sciences, human-computer interaction, VR/AR/MR communication technologies, and security and network platform technologies.

Telecommuting and online meetings were on the rise pre-COVID-19, but the pandemic has accelerated this trend. While online communication tools lend themselves to conveying verbal and written information, i.e., what we say, they struggle to transmit the various forms of nonverbal information that are essential for fluid communication, i.e., how we say things.

Common examples of nonverbal information include facial expressions, body language, gestures, eye contact, clothing, and other physical cues that convey emotion, attitude, and intention.

"COVID-19 ramped up the use of online meetings, but we noticed workers suffering from decreased work efficiency as a result of poor communication," says Yoshifumi Kitamura, the director of the research center and deputy director of RIEC. "The strong preference for face-to-face meetings led us to ponder what differentiates them from online communication, with one of the most important causes being online meetings' inability to convey nonverbal information. Hence why we set up this research center."

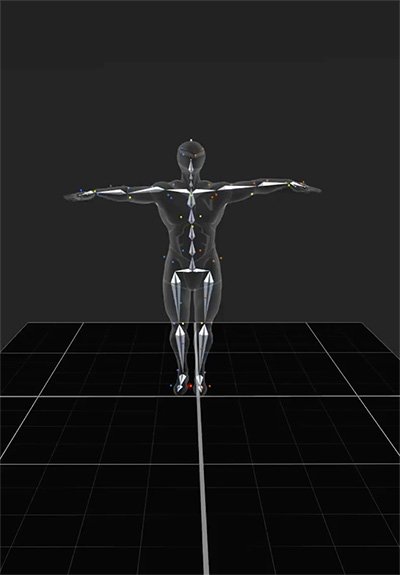

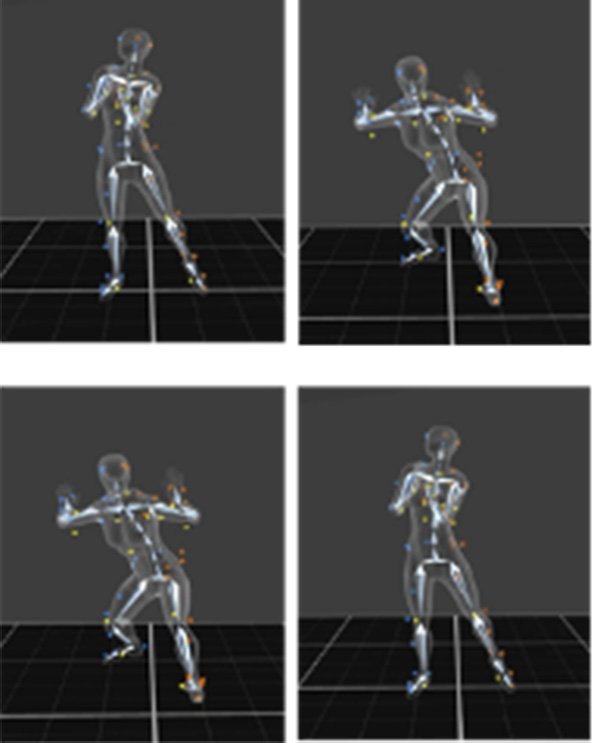

Already, the center is developing a motion unit AI that maps and generates emotionally rich movements. To do so, researchers have been working with 100 actors, 50 from Taiwan and 50 from local acting groups in Sendai, to record more than a dozen emotions using 12 motion capture cameras positioned at varying angles. In total, 11,100 movements have been collected.

Assistant Professor Miao Cheng, who is working on the project, says that the Facial Action Coding System (FACS), which was popularized in the American crime drama Lie to Me, serves as an easy example for understanding motion unit AI. "The FACS is well-established and enables people to derive emotions based on facial muscles. What we are trying to do is generate a coding system based on body movements. Thanks to the talented crew of actors, we could capture basic human emotions based on body movement. We will use AI to extract the signature motion unit that represents certain emotions like joy, sadness, fear, etc. and feed it into a database."

Dr. Chia-huei Tseng, an associate professor at RIEC, also points out that this will be the first motion database in Asia.

"AI that generates emotions draws most of its data from North America or Europe, meaning Asia is underrepresented. Our collected data accommodates culturally specific emotions and will make the aggregate data less biased."

AI that draws on the database can create more humanized avatars and can even help graphic designers generate more human-like animated characters without having actors don motion capture suits and mimic movements. Even when motion capture suits are needed, body parts sometimes obscure the sensors, resulting in jittery animation. Cheng and her team are currently developing an algorithm to correct such noise.

Kitamura believes that AI can do more than just facilitate a smoother online meeting; it can also make online communication platforms more accommodative.

"Physical disabilities such as blindness or deafness render it incredibly difficult for a person to convey and understand nonverbal cues. With further innovations, we could potentially use an avatar or robot to express emotions on their behalf or interpret another person's emotions and convert it into a medium through which they can understand."

The Interdisciplinary ICT Research Center for Cyber and Real Spaces comprises 20 full-time and adjunct researchers and is currently hiring additional researchers.

Contact:

Yoshifumi Kitamura,

Research Institute of Electrical Communication

Email: kitamura@riec.tohoku.ac.jp

Website: https://www.cr-ict.riec.tohoku.ac.jp/